Workflow · Integration testers

From a session to a regression in two clicks

Someone says “this endpoint used to return X.” You need to verify that claim against three environments and come away with a test file.

Bowire records real calls across every protocol, replays them against any environment, and can turn the response payloads into Newman-style assertions that live right next to the request.

Capture the truth once

Click record, run your test scenario, click stop. Every call across every installed plugin ends up in one timeline — request, response, status, timing, metadata.

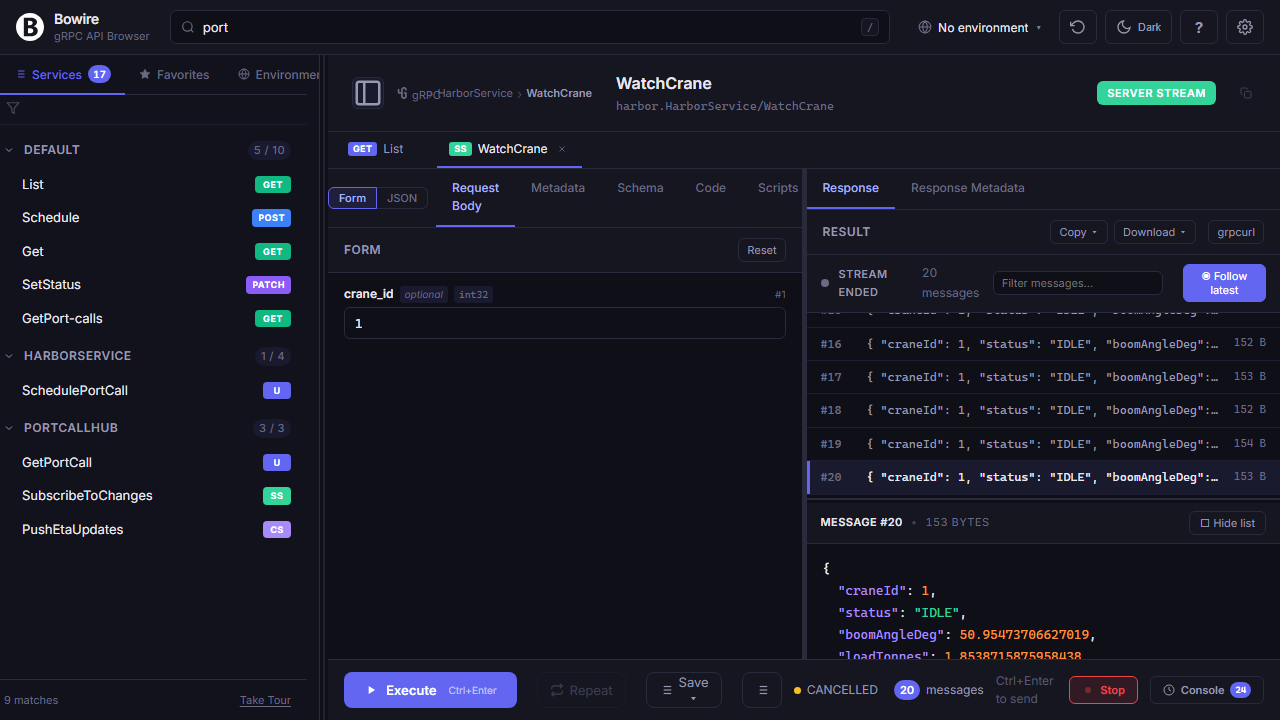

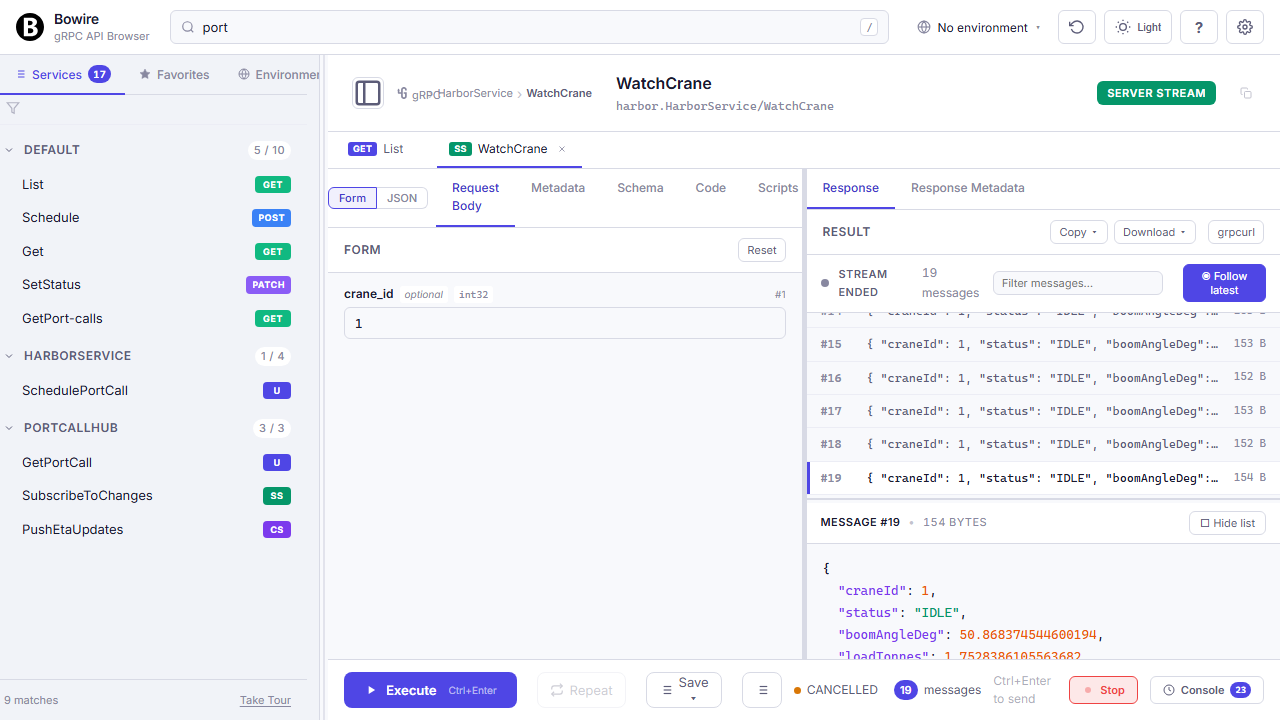

Record & replay — Postman Collection-Runner parity

gRPC streams, SignalR hub invocations, WebSocket frames, MQTT publishes, REST hits — all in the same timeline, all replayable.

Recordings are JSON files you can commit to the repo next to the service they test. Replay against Dev to check a dev-only regression; against Staging to confirm a release candidate; against Prod (read-only) to compare drift between the spec and what the live system actually returns.
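To make the "one recording, many environments" idea concrete, here is a minimal Python sketch. It is not Bowire's recording schema or replay engine: the JSON shape, the `steps` field, the `baseUrl` variable and the `example.test` hosts are all assumptions made up for illustration. It only shows how the same committed file can resolve to different targets per environment.

```python
import json
from string import Template

# Hypothetical recording shape, invented for this sketch.
# Bowire's real JSON schema may look different.
RECORDING_JSON = """
{
  "name": "orders-smoke",
  "steps": [
    {"protocol": "rest", "method": "GET", "url": "${baseUrl}/orders/42"},
    {"protocol": "rest", "method": "GET", "url": "${baseUrl}/health"}
  ]
}
"""

# Hypothetical environment definitions (names follow the text, hosts are made up).
ENVIRONMENTS = {
    "Dev": {"baseUrl": "https://dev.example.test"},
    "Staging": {"baseUrl": "https://staging.example.test"},
}


def resolve_steps(recording, variables):
    """Return copies of the recorded steps with ${variable} placeholders filled in."""
    resolved = []
    for step in recording["steps"]:
        # string.Template understands the ${name} placeholder syntax natively.
        url = Template(step["url"]).substitute(variables)
        resolved.append({**step, "url": url})
    return resolved


recording = json.loads(RECORDING_JSON)
for env_name, variables in ENVIRONMENTS.items():
    for step in resolve_steps(recording, variables):
        print(env_name, step["method"], step["url"])
```

The recording itself never changes between runs; only the variable set does, which is what keeps the file safe to commit next to the service.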

Record & replay in detail →

Compare across environments

Environments aren’t identical. Bowire makes it obvious which variable drifted where.

Side-by-side environment diff

Pick any two configured environments, get a colour-coded diff of every variable and header. Catch the stale secret before it burns your afternoon.

Equal rows fold away, changed rows highlight, and only-in-A / only-in-B rows are clearly flagged. Works across the environments you use in recordings (Dev/Staging/Prod) and the per-URL overrides that Bowire applies at request time via ${variable} substitution.
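The four row types described above map onto a simple set comparison. The Python sketch below is an illustration of that classification, not Bowire's diff code; the function name `diff_environments` and the sample variables are hypothetical.

```python
def diff_environments(a, b):
    """Classify every variable from two environments into four buckets."""
    result = {"equal": [], "changed": [], "only_in_a": [], "only_in_b": []}
    for key in sorted(set(a) | set(b)):
        if key not in b:
            result["only_in_a"].append(key)
        elif key not in a:
            result["only_in_b"].append(key)
        elif a[key] == b[key]:
            result["equal"].append(key)
        else:
            result["changed"].append(key)
    return result


# Hypothetical variable sets for two environments.
staging = {"baseUrl": "https://staging.example.test", "apiKey": "key-2024", "timeout": "30"}
prod = {"baseUrl": "https://prod.example.test", "apiKey": "key-2024", "region": "eu"}

report = diff_environments(staging, prod)

# "Equal" rows are folded away here by simply not printing them.
for bucket in ("changed", "only_in_a", "only_in_b"):
    print(bucket, report[bucket])
```

A stale secret would show up in the `changed` bucket; a variable someone forgot to define in one environment lands in `only_in_a` or `only_in_b`.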

Turn it into a regression

A one-off capture becomes a repeatable check. Inline assertions run on every replay, in CI or locally.

Newman-style assertions — next to the request, not in a separate file

Eleven comparison operators, JSONPath-style targeting, pass / fail badges inline.

Pick a value in a recorded response and add an eq / gt / contains / matches / type check. Run the recording and every assertion lights up green or red inline. Export to HAR for the bug tracker, or drive the whole set from bowire call --recording in CI.
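For readers who want to see what such a check boils down to, here is a rough Python sketch of evaluating the five operators named above against a response payload. It is an assumption-laden illustration, not Bowire's evaluator: the dotted path syntax is a simplified stand-in for JSONPath-style targeting, and `resolve_path`, `run_assertions`, the type names and the sample payload are all hypothetical.

```python
import re

# Hypothetical mapping from JSON type names to Python types.
TYPE_NAMES = {"string": str, "number": (int, float), "boolean": bool,
              "array": list, "object": dict}


def resolve_path(payload, path):
    """Walk a simplified dotted path such as 'items.0.sku' (not full JSONPath)."""
    current = payload
    for part in path.split("."):
        if isinstance(current, list):
            current = current[int(part)]
        else:
            current = current[part]
    return current


def check_type(actual, expected):
    # bool is a subclass of int in Python, so exclude it from "number".
    if expected == "number" and isinstance(actual, bool):
        return False
    return isinstance(actual, TYPE_NAMES[expected])


OPERATORS = {
    "eq": lambda actual, expected: actual == expected,
    "gt": lambda actual, expected: actual > expected,
    "contains": lambda actual, expected: expected in actual,
    "matches": lambda actual, expected: re.search(expected, actual) is not None,
    "type": check_type,
}


def run_assertions(payload, assertions):
    """Return one (path, operator, passed) tuple per assertion."""
    results = []
    for path, op, expected in assertions:
        actual = resolve_path(payload, path)
        results.append((path, op, OPERATORS[op](actual, expected)))
    return results


# Hypothetical recorded response and the checks attached to it.
response = {"status": "shipped", "total": 129.5,
            "items": [{"sku": "A-100", "qty": 2}]}
assertions = [
    ("status", "eq", "shipped"),
    ("total", "gt", 100),
    ("items.0.sku", "matches", r"^A-\d+$"),
    ("items", "type", "array"),
    ("status", "contains", "pend"),
]

for path, op, passed in run_assertions(response, assertions):
    print("PASS" if passed else "FAIL", path, op)
```

Keeping each assertion as plain data (path, operator, expected value) is what lets it sit next to the request rather than in a separate script file.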

Test what’s actually shipped

Record once, replay everywhere, assert with the operators you already know.

Go to the recording docs